We pulled 600 biomedical research articles from PubMed, all published in 2023, all with abstracts, spanning immunology, cell biology, and molecular biology. We then classified every abstract by structure, word count, readability, quantitative content, and language features, and checked citation counts as of April 2026, giving each paper 2–3 years to accumulate citations.

The goal: to find out which abstract features, if any, correlate with higher citation counts. The results were not what we expected.

Building on Prior Work

Research on how abstract characteristics affect citation outcomes is sparser than the equivalent literature on titles, but several important studies inform our approach:

Hartley (2004, 2014) conducted two comprehensive reviews of research on structured abstracts, finding that structured abstracts are judged more informative, readable, and useful by both authors and readers. However, his reviews focused on quality and comprehension rather than citation impact, so whether the structured format translates into more citations was left open (doi:10.3163/1536-5050.102.3.002).

Falagas et al. (2013) analysed original studies from five major general medicine journals and found that article length and number of authors independently predicted citation counts, but abstract word count showed a weaker relationship (doi:10.1371/journal.pone.0049476). Their work raised the question of whether abstract-level features matter independently of the full paper.

Plavén-Sigray et al. (2017) documented a steady decline in the readability of scientific abstracts over time across 709,577 abstracts from 123 journals, suggesting growing use of specialist jargon (doi:10.7554/eLife.27725). This raised a natural question: does higher readability, bucking the trend, correlate with more citations? Our data tests this directly.

Paiva, Lima & Paiva (2012) found that articles with short titles describing results were cited more often, and noted that abstract characteristics interacted with title features in predicting impact (doi:10.6061/clinics/2012(05)17). Our study extends this by examining abstract features in isolation across a larger biomedical sample.

What the existing literature lacks is a direct comparison of structured vs. unstructured abstract format on citation outcomes, using recent biomedical data at scale. Most studies either review the quality of structured abstracts (do readers prefer them?) or examine other bibliometric factors alongside abstracts. Our analysis specifically asks: does abstract format, length, readability, or clinical framing predict citations in 600 biomedical papers from 2023?

Dataset: 600 PubMed articles (2023) across immunology, cell biology, and molecular biology

Source: NCBI E-utilities API (esearch + efetch + elink)

Citation window: 2–3 years (citations counted April 2026)

Median citations: 4 | Mean: 7.3 | Range: 0–123

MeSH terms: immunology, cell biology, molecular biology. Article type: journal article. Language: English. Abstracts required.
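The retrieval code itself is not shown here. Below is a minimal sketch of the three E-utilities calls named above, using only Python's standard library. Several parts are our own assumptions: the search string is a hypothetical reconstruction of the criteria listed (including the MeSH syntax and the `hasabstract` filter), `retmax=600` is a placeholder because we do not say how the 600 papers were drawn from the result set, and `esearch_url`, `efetch_url`, `elink_citedin_url` and `count_citedin` are illustrative names. The JSON structure that `count_citedin` parses reflects our understanding of elink's `retmode=json` output and should be checked against a live response.

```python
from urllib.parse import urlencode

EUTILS = "https://eutils.ncbi.nlm.nih.gov/entrez/eutils"

# Hypothetical search string assembled from the criteria listed above;
# not the study's exact query.
TERM = (
    '(immunology[mh] OR "cell biology"[mh] OR "molecular biology"[mh])'
    ' AND "journal article"[pt] AND english[la] AND hasabstract AND 2023[dp]'
)


def esearch_url(term: str, retmax: int = 600) -> str:
    """URL for an esearch call that returns matching PMIDs as JSON."""
    params = {"db": "pubmed", "term": term, "retmax": retmax, "retmode": "json"}
    return f"{EUTILS}/esearch.fcgi?{urlencode(params)}"


def efetch_url(pmids: list) -> str:
    """URL for an efetch call returning full PubMed XML (abstracts included)."""
    params = {"db": "pubmed", "id": ",".join(pmids), "retmode": "xml"}
    return f"{EUTILS}/efetch.fcgi?{urlencode(params)}"


def elink_citedin_url(pmid: str) -> str:
    """URL for an elink call listing PubMed articles that cite one PMID."""
    params = {
        "dbfrom": "pubmed",
        "db": "pubmed",
        "linkname": "pubmed_pubmed_citedin",
        "id": pmid,
        "retmode": "json",
    }
    return f"{EUTILS}/elink.fcgi?{urlencode(params)}"


def count_citedin(elink_json: dict) -> int:
    """Count 'cited in' links in a parsed elink JSON response (assumed shape)."""
    total = 0
    for linkset in elink_json.get("linksets", []):
        for linksetdb in linkset.get("linksetdbs", []):
            if linksetdb.get("linkname") == "pubmed_pubmed_citedin":
                total += len(linksetdb.get("links", []))
    return total


print(esearch_url(TERM))
```

Sending one PMID per elink call keeps each paper's count unambiguous. NCBI also asks clients without an API key to stay under about three requests per second, so a real run over 600 papers needs throttling.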

1. Structured vs Unstructured: No Clear Winner

This was our biggest surprise. Structured abstracts, those with labelled sections like Background, Methods, Results, and Conclusions, are widely recommended by journals and writing guides. Many researchers assume this format helps readers and therefore drives more citations.

Our data does not support that assumption.

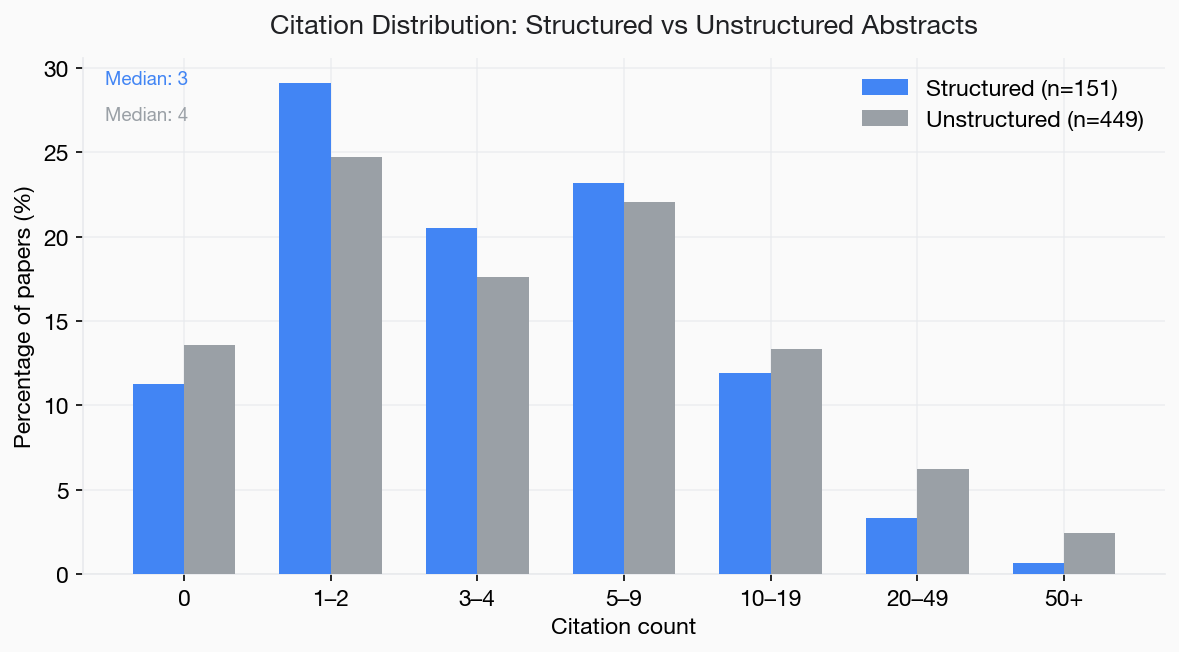

- Structured abstracts (n=151): median 3 citations

- Unstructured abstracts (n=449): median 4 citations

The 25% of papers with structured abstracts actually had a slightly lower median citation count than the 75% without. The difference is small and we would not read much into it; the key finding is the absence of an advantage. Structured format alone does not appear to boost citation impact in this dataset.

Figure 1. Citation distribution for structured (n=151) and unstructured (n=449) abstracts. Structured abstracts show no citation advantage.

Structured abstracts did not outperform unstructured abstracts on citations. Follow your journal's format requirements, but do not assume that adding section labels will increase your citation count.
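We have not described how abstracts were sorted into structured and unstructured. One plausible rule works on the PubMed XML returned by efetch, where structured abstracts are split into several `AbstractText` elements that carry a `Label` attribute (BACKGROUND, METHODS and so on). The sketch below assumes that rule. The two-label threshold and the function name `is_structured` are our own choices.

```python
import xml.etree.ElementTree as ET


def is_structured(pubmed_article_xml: str, min_labels: int = 2) -> bool:
    """Treat an abstract as structured if it has at least `min_labels` labelled sections."""
    root = ET.fromstring(pubmed_article_xml)
    sections = root.findall(".//Abstract/AbstractText")
    labelled = [s for s in sections if s.get("Label")]
    return len(labelled) >= min_labels


# Minimal hand-written records in the PubMed XML layout (toy examples).
structured = (
    "<PubmedArticle><MedlineCitation><Article><Abstract>"
    '<AbstractText Label="BACKGROUND" NlmCategory="BACKGROUND">Why we looked.</AbstractText>'
    '<AbstractText Label="METHODS" NlmCategory="METHODS">What we did.</AbstractText>'
    "</Abstract></Article></MedlineCitation></PubmedArticle>"
)
unstructured = (
    "<PubmedArticle><MedlineCitation><Article><Abstract>"
    "<AbstractText>One continuous paragraph.</AbstractText>"
    "</Abstract></Article></MedlineCitation></PubmedArticle>"
)
print(is_structured(structured), is_structured(unstructured))  # True False
```

A label-based rule depends on publishers depositing labels. An abstract with inline headings typed into plain text would be counted as unstructured.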

2. Abstract Length: A Sweet Spot Around 150–200 Words

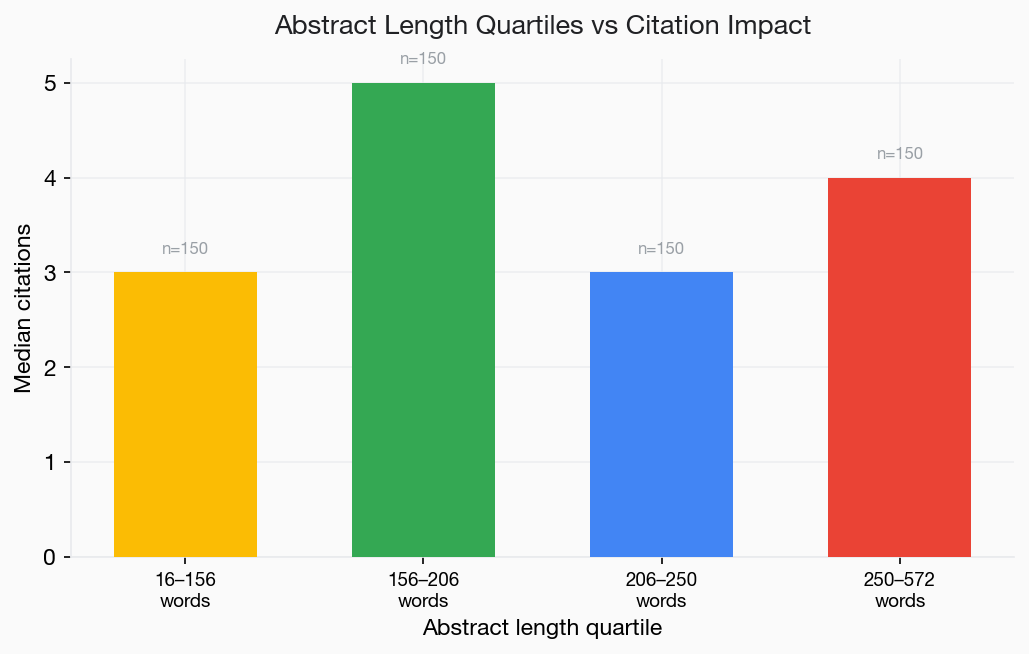

We divided the 600 papers into quartiles by abstract word count. The second quartile, abstracts between 156 and 206 words, had the highest median citations.

- Q1 (16–155 words): median 3 citations

- Q2 (156–206 words): median 5 citations

- Q3 (207–250 words): median 3 citations

- Q4 (251–572 words): median 4 citations

Very short abstracts (under 156 words) may lack sufficient detail for readers to assess the work. Very long abstracts do not appear to add a citation advantage, though they do not dramatically hurt either.

Figure 2. Median citations by abstract length quartile. The 156–206 word range shows the highest median (5 citations).
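Where the quartile cut points fall depends on the quantile method and on how ties at the boundaries are handled, and we have not specified either. Below is a minimal sketch of the split described above, using Python's `statistics.quantiles` with its default "exclusive" method (that choice, the function name `median_by_quartile`, and the toy data are assumptions).

```python
from statistics import median, quantiles


def median_by_quartile(word_counts, citations):
    """Median citations within each word-count quartile (1 = shortest)."""
    # Cut points at the 25th, 50th and 75th percentiles of word count.
    q1, q2, q3 = quantiles(word_counts, n=4)
    bins = {1: [], 2: [], 3: [], 4: []}
    for words, cites in zip(word_counts, citations):
        if words <= q1:
            bins[1].append(cites)
        elif words <= q2:
            bins[2].append(cites)
        elif words <= q3:
            bins[3].append(cites)
        else:
            bins[4].append(cites)
    return {q: median(c) for q, c in bins.items() if c}


# Toy data, not from the study.
words = [100, 110, 120, 130, 160, 170, 180, 190, 210, 220, 230, 240, 300, 310, 320, 330]
cites = [1, 2, 3, 4, 5, 6, 7, 8, 1, 2, 3, 4, 9, 9, 9, 9]
print(median_by_quartile(words, cites))  # {1: 2.5, 2: 6.5, 3: 2.5, 4: 9.0}
```

Because many abstracts share the same word count, quartile groups built this way need not contain exactly 150 papers each.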

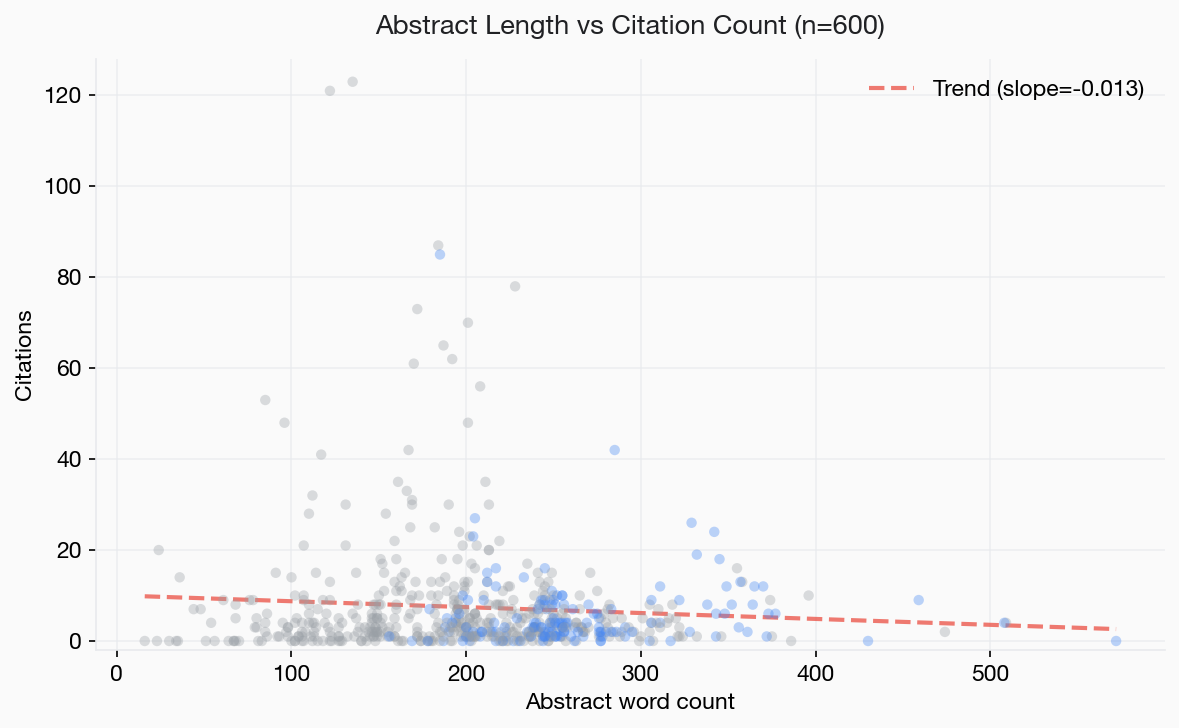

Figure 3. Abstract word count vs citations (n=600). Blue dots are structured abstracts, grey are unstructured. The trend line is nearly flat.
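A flat trend line through heavily skewed citation counts is easy to misread, and we report no correlation coefficient for it. A rank-based measure such as Spearman's rho is one option. The sketch below computes it as the Pearson correlation of average ranks, so tied values are handled. The function names and toy data are illustrative only.

```python
from statistics import mean


def average_ranks(values):
    """1-based ranks; tied values share the mean of their positions."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        avg = (i + j) / 2 + 1
        for k in range(i, j + 1):
            ranks[order[k]] = avg
        i = j + 1
    return ranks


def spearman(x, y):
    """Spearman's rho: Pearson correlation computed on average ranks."""
    rx, ry = average_ranks(x), average_ranks(y)
    mx, my = mean(rx), mean(ry)
    cov = sum((a - mx) * (b - my) for a, b in zip(rx, ry))
    sx = sum((a - mx) ** 2 for a in rx) ** 0.5
    sy = sum((b - my) ** 2 for b in ry) ** 0.5
    return cov / (sx * sy)  # undefined if either variable is constant


print(round(spearman([120, 180, 240, 300], [2, 5, 3, 4]), 2))  # 0.4
```

A rho close to zero on the real 600 papers would put a number on the "nearly flat" reading of Figure 3.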

3. Clinical Relevance: The Strongest Signal

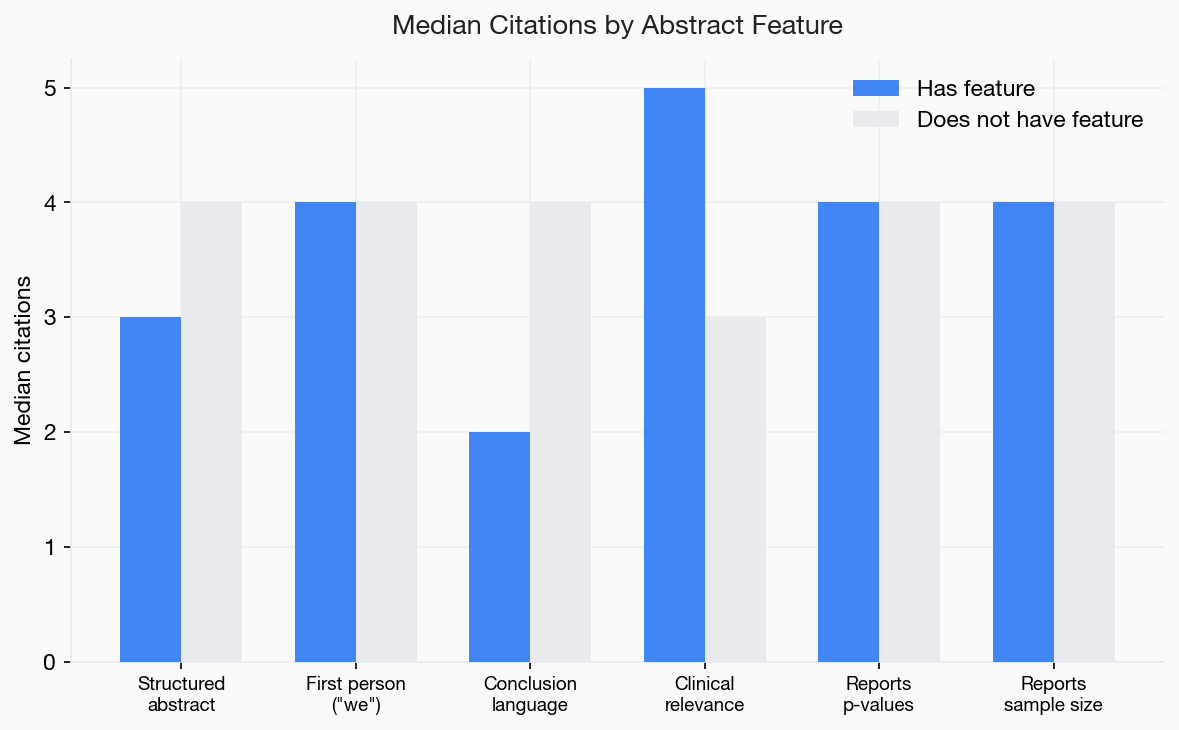

Of all the features we tested, clinical relevance language had the clearest correlation with higher citations. Abstracts that mentioned therapeutic targets, biomarkers, diagnostic potential, prognostic value, or patient outcomes had measurably higher citation counts.

- Clinical relevance language present (n=217): median 5 citations

- No clinical relevance language (n=383): median 3 citations

That is a 67% higher median (5 vs 3 citations), the largest positive difference we observed across any binary feature.

One plausible explanation is that clinical applicability broadens the audience for a paper beyond its immediate subfield. A cell biology paper that connects its findings to a therapeutic implication can attract interest from clinicians, pharmacologists, and translational researchers, not just cell biologists.

Figure 4. Median citations by abstract feature. Clinical relevance shows the largest gap between papers with and without the feature.

If your research has any clinical relevance (therapeutic targets, diagnostic potential, biomarker applications, patient outcomes), state it explicitly in the abstract. This was the single strongest correlate of higher citations in our dataset.
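We have not published the term list behind the clinical-relevance flag. Below is a minimal sketch of such a flag, assuming a short hypothetical pattern built from the categories named above. It also shows the arithmetic behind the 67% figure, (5 - 3) / 3 ≈ 0.67. `CLINICAL_PATTERN`, `has_clinical_language` and `median_gap` are illustrative names.

```python
import re
from statistics import median

# Hypothetical keyword pattern; the study's actual term list is not published.
CLINICAL_PATTERN = re.compile(
    r"\b(?:therapeutic targets?|biomarkers?|diagnostic|prognostic|patient outcomes?)\b",
    re.IGNORECASE,
)


def has_clinical_language(abstract: str) -> bool:
    """True if any clinical-relevance term appears as a whole word or phrase."""
    return CLINICAL_PATTERN.search(abstract) is not None


def median_gap(abstracts, citations):
    """Return (median with flag, median without flag, relative difference)."""
    with_flag = [c for a, c in zip(abstracts, citations) if has_clinical_language(a)]
    without_flag = [c for a, c in zip(abstracts, citations) if not has_clinical_language(a)]
    m_with, m_without = median(with_flag), median(without_flag)
    # Relative difference; undefined when the comparison median is 0.
    return m_with, m_without, (m_with - m_without) / m_without


# Toy data, not from the study.
abstracts = [
    "We identify a candidate biomarker of sepsis.",
    "Prognostic value of CD8 T cell density in tumours.",
    "We map mitochondrial fission in yeast.",
    "Actin dynamics during cell migration.",
]
print(median_gap(abstracts, [5, 5, 3, 3]))  # (5.0, 3.0, 0.6666666666666666)
```

A keyword flag like this is coarse. It cannot distinguish a central therapeutic claim from a passing mention, which is one reason the association should not be read as causal.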

4. Quantitative Results in the Abstract

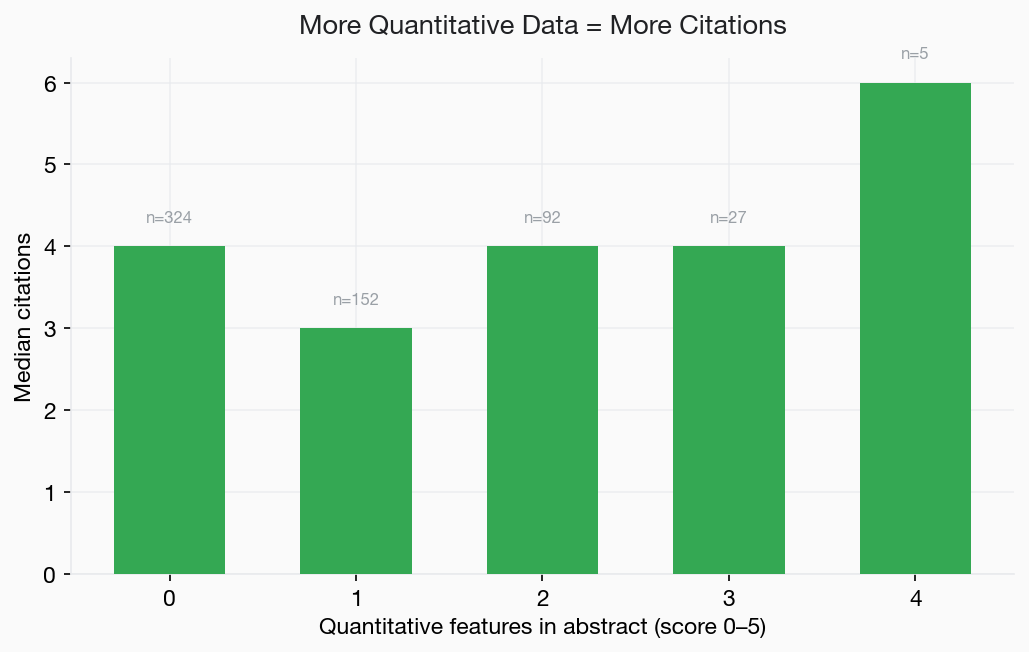

We scored each abstract on five quantitative features: p-values, confidence intervals, percentages, sample sizes, and effect sizes (odds ratios, hazard ratios, fold changes). Each paper received one point per feature present, giving a score from 0 to 5.

- Score 0 (n=324): median 4 citations

- Score 1 (n=152): median 3 citations

- Score 2 (n=92): median 4 citations

- Score 3 (n=27): median 4 citations

- Score 4 (n=5): median 6 citations

The relationship is weaker than we expected. Papers with the most quantitative reporting (score 4; no abstract scored 5) had the highest median, but that group is tiny (n=5). Across the bulk of the data (scores 0–3), the medians are nearly identical.

Figure 5. Median citations by quantitative reporting score (0–5). The relationship is weak, with the exception of the small score-4 group.

This does not mean you should omit quantitative results. Reporting effect sizes, sample sizes, and p-values is good scientific practice and helps readers assess your findings at a glance. But our data suggests that quantitative reporting in the abstract is not a strong independent driver of citation counts.
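We have not given the detection rules for the five features. Below is a minimal sketch of a one-point-per-feature score, assuming hypothetical regular expressions of our own. One consequence of this simple approach is that a "95% CI" also counts toward the percentage feature, which a stricter rule might exclude.

```python
import re

# Hypothetical patterns, one per feature; the study's own rules are not published.
QUANT_PATTERNS = {
    "p_value": re.compile(r"\bp\s*[<>=≤]\s*0?\.\d+", re.IGNORECASE),
    "confidence_interval": re.compile(
        r"\b(?:\d+\s*%\s*CI|confidence intervals?)\b", re.IGNORECASE
    ),
    "percentage": re.compile(r"\d+(?:\.\d+)?\s*%"),
    "sample_size": re.compile(r"\bn\s*=\s*\d+", re.IGNORECASE),
    "effect_size": re.compile(
        r"\b(?:odds ratios?|hazard ratios?|fold[- ]changes?|\d+(?:\.\d+)?-fold)\b",
        re.IGNORECASE,
    ),
}


def quant_score(abstract: str) -> int:
    """One point per feature found anywhere in the abstract (0 to 5)."""
    return sum(1 for pattern in QUANT_PATTERNS.values() if pattern.search(abstract))


print(quant_score("Mice (n = 12) showed a 2.5-fold increase (p < 0.01)."))  # 3
print(quant_score("Risk fell by 30% (95% CI 20-40%)."))  # 2
```

The patterns deliberately skip abbreviations such as "OR" and "HR", because those would also match ordinary words in running text.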

5. Readability: Minimal Impact

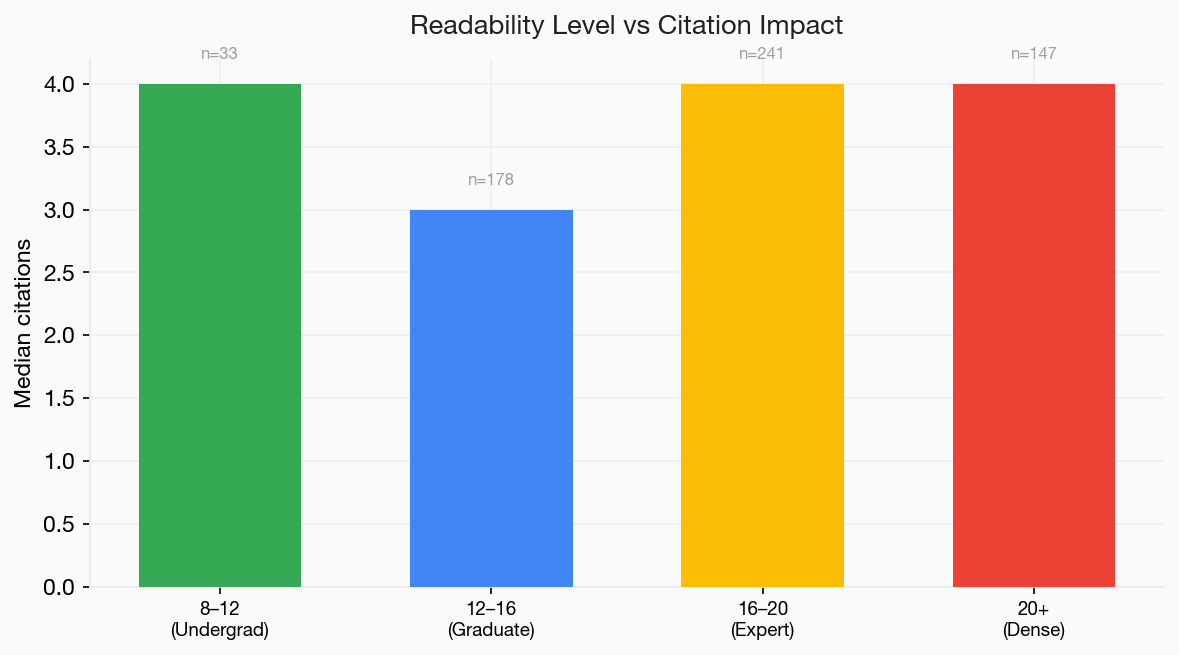

We measured readability using the Flesch-Kincaid grade level, which estimates the US school grade needed to understand the text. Higher grades mean more complex writing.
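The grade-level formula is standard: 0.39 × (words per sentence) + 11.8 × (syllables per word) - 15.59. We have not said which software computed it, and tools differ mainly in how they split sentences and count syllables. Below is a minimal sketch that uses a crude, hypothetical vowel-group syllable counter (`count_syllables`), so its scores will not match a dictionary-based tool exactly.

```python
import re


def count_syllables(word: str) -> int:
    """Crude heuristic: count vowel groups, then drop a silent final 'e'."""
    word = word.lower()
    count = len(re.findall(r"[aeiouy]+", word))
    # "make" loses its final e; "table" keeps it because of the -le ending.
    if word.endswith("e") and not word.endswith("le") and count > 1:
        count -= 1
    return max(count, 1)


def fk_grade(text: str) -> float:
    """Flesch-Kincaid grade = 0.39*(words/sentences) + 11.8*(syllables/words) - 15.59."""
    # Count end punctuation only when followed by whitespace or the end of the text,
    # so decimals such as "0.05" are not treated as sentence breaks.
    sentences = max(len(re.findall(r"[.!?]+(?=\s|$)", text)), 1)
    # Alphabetic words, allowing internal hyphens and apostrophes; numbers are ignored.
    words = re.findall(r"[A-Za-z]+(?:['-][A-Za-z]+)*", text)
    if not words:
        return 0.0
    syllables = sum(count_syllables(w) for w in words)
    return 0.39 * len(words) / sentences + 11.8 * syllables / len(words) - 15.59


print(round(fk_grade("The cat sat. The dog ran."), 2))  # -2.62
```

Long single-sentence abstracts full of polysyllabic terms easily score above grade 20 with this formula, which matches the size of the "20+" group reported below.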

- FK 8–12 (high school level): n=33, median 4 citations

- FK 12–16 (undergraduate level): n=178, median 3 citations

- FK 16–20 (graduate level): n=241, median 4 citations

- FK 20+ (dense/specialist): n=147, median 4 citations

There is no meaningful readability gradient. Abstracts at all reading levels perform similarly. This contrasts with broader evidence on full-text readability and citations, and likely reflects the fact that biomedical abstracts are read primarily by domain specialists who are comfortable with technical language.

Figure 6. Readability level (Flesch-Kincaid grade) vs median citations. No clear advantage for more readable abstracts.

6. First Person and Conclusion Language

Two other language features showed no positive association with citations:

First person ("we", "our"): 58% of abstracts used first person. Median citations were identical (4) regardless of voice. The old convention of avoiding first person appears to have no citation impact.

Explicit conclusion language ("these results suggest", "in conclusion", "taken together"): Only 8% of abstracts (roughly 48 papers) included such phrasing, and they had a lower median citation count (2 vs 4). This may be a confound, since papers with weaker results may lean more heavily on interpretive framing, and the group is small. Even so, it does not support the advice to always include conclusion statements.
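Phrase features like these are usually detected by simple pattern matching, where word boundaries matter. Without them, "we" also matches inside "were", "however" and "between". Below is a minimal sketch, assuming the phrase list is limited to the examples quoted above (the study's full list is not given). `language_flags` is an illustrative name.

```python
import re

# Word boundaries stop "we" from matching inside "were", "however" or "between".
FIRST_PERSON = re.compile(r"\b(?:we|our)\b", re.IGNORECASE)
# Hypothetical phrase list, limited to the examples quoted above.
CONCLUSION = re.compile(
    r"\b(?:these results suggest|in conclusion|taken together)\b", re.IGNORECASE
)


def language_flags(abstract: str) -> dict:
    """Flag first-person voice and explicit conclusion phrasing in one abstract."""
    return {
        "first_person": FIRST_PERSON.search(abstract) is not None,
        "conclusion": CONCLUSION.search(abstract) is not None,
    }


print(language_flags("However, responses were mixed between groups."))
print(language_flags("Taken together, our data implicate IL-6 signalling."))
```

The first call flags neither feature. The second flags both.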

What Does This Mean for Your Next Paper?

The honest summary: most abstract features we tested had weak or no correlation with citations. Commonly repeated academic SEO advice ("use structured format", "write more readably", "include quantitative results") did not produce clear citation signals in our dataset of 600 papers.

What did emerge:

- State clinical relevance explicitly. This was the strongest and most actionable finding. If your work has therapeutic, diagnostic, or prognostic implications, say so in the abstract. A 67% increase in median citations is not trivial.

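- Aim for roughly 150–200 words. The data suggests a modest sweet spot: long enough to convey your findings, short enough to hold attention. The overall trend between length and citations is nearly flat, so treat this as a weak signal.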

- Follow your journal's format. Structured or unstructured, it does not appear to matter for citations. Do whatever your target journal requires.

- Focus on what you say, not how you format it. The content of your findings, particularly their breadth of relevance, matters more than structural or stylistic choices in the abstract.

Limitations

This is a correlational study. We cannot prove that any abstract feature causes higher citations. Papers with clinical relevance may simply report more impactful findings. Word count may correlate with journal prestige. These confounds are inherent in observational bibliometric research.

The dataset is limited to 600 papers in immunology, cell biology, and molecular biology from 2023. Results may differ in other fields, other years, or with larger samples. Citation counts from PubMed's elink API capture only citing articles indexed in PubMed, which may undercount total citations.

We also acknowledge that the median citation differences we observed are small (3 vs 4 vs 5). In a dataset where the median is 4 and the distribution is heavily right-skewed, small changes in median should be interpreted cautiously.
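With a median of 4 against a mean of 7.3, the citation distribution is right-skewed, and medians of integer counts tend to move in whole steps. A percentile bootstrap is one way to put an interval around a difference in medians. The sketch below resamples each group with replacement. The function name, the defaults (2,000 resamples, a 95% interval, a fixed seed) and the toy data are our own assumptions.

```python
import random
from statistics import median


def bootstrap_median_diff(a, b, n_boot=2000, alpha=0.05, seed=0):
    """Point estimate and percentile-bootstrap interval for median(a) - median(b)."""
    rng = random.Random(seed)  # fixed seed so the interval is reproducible
    diffs = sorted(
        median(rng.choices(a, k=len(a))) - median(rng.choices(b, k=len(b)))
        for _ in range(n_boot)
    )
    lower = diffs[int(alpha / 2 * n_boot)]
    upper = diffs[int((1 - alpha / 2) * n_boot) - 1]
    return median(a) - median(b), (lower, upper)


# Toy skewed counts, not the study's data.
with_feature = [0, 1, 2, 3, 5, 5, 6, 9, 14, 40]
without_feature = [0, 0, 1, 2, 3, 3, 4, 6, 11, 25]
estimate, (low, high) = bootstrap_median_diff(with_feature, without_feature)
print(estimate, low, high)
```

Because bootstrap medians of small integer counts cluster on a few values, the interval is coarse. If it comfortably spans zero, a two-citation gap in medians is hard to distinguish from noise.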

References

- Hartley J. Current findings from research on structured abstracts: an update. J Med Libr Assoc. 2014;102(3):146–148. doi:10.3163/1536-5050.102.3.002

- Falagas ME, Zarkali A, Karageorgopoulos DE, Bardakas V, Mavros MN. The impact of article length on the number of future citations: A bibliometric analysis of general medicine journals. PLoS ONE. 2013;8(2):e49476. doi:10.1371/journal.pone.0049476

- Plavén-Sigray P, Matheson GJ, Schiffler BC, Thompson WH. The readability of scientific texts is decreasing over time. eLife. 2017;6:e27725. doi:10.7554/eLife.27725

- Paiva CE, Lima JPSN, Paiva BSR. Articles with short titles describing the results are cited more often. Clinics. 2012;67(5):509–513. doi:10.6061/clinics/2012(05)17

- Letchford A, Moat HS, Preis T. The advantage of short paper titles. R Soc Open Sci. 2015;2(8):150266. doi:10.1098/rsos.150266

- Jamali HR, Nikzad M. Article title type and its relation with the number of downloads and citations. Scientometrics. 2011;88:653–661. doi:10.1007/s11192-011-0412-z

- Ball R. Scholarly communication in transition: The use of question marks in the titles of scientific articles in medicine, life sciences and physics 1966–2005. Scientometrics. 2009;79(3):667–679. doi:10.1007/s11192-007-1984-5

Frequently Asked Questions

Do structured abstracts get more citations?

Not in our dataset. Structured abstracts (n=151) had a median of 3 citations vs 4 for unstructured (n=449). The format of your abstract appears to matter less than what you say in it.

What abstract feature best predicts higher citations?

Clinical relevance language: mentions of therapeutic targets, biomarkers, diagnostic or prognostic potential, or patient outcomes. Abstracts with this language had a median of 5 citations vs 3 without.

How long should my abstract be?

Our data points to roughly 150–200 words. Abstracts in the 156–206 word quartile had the highest median citations (5), compared with 3–4 for shorter or longer abstracts.

Does writing more readably increase citations?

Not for abstracts, based on our data. Flesch-Kincaid grade level showed no meaningful correlation with citations, possibly because the main readers of biomedical abstracts are domain specialists who are comfortable with technical language.

Should I include p-values and sample sizes in my abstract?

Yes, for scientific rigour and transparency, but our data shows this alone does not strongly predict higher citations. The content of your results matters more than the quantitative scaffolding around them.

Get Your Abstract Optimised

We analyse your abstract for discoverability, keyword placement, readability, and clinical framing, backed by evidence like this study.
